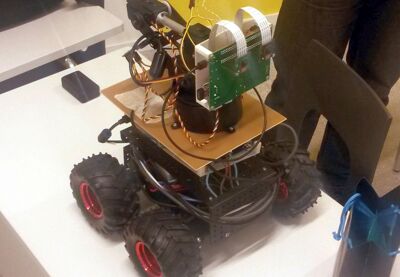

Altarobot 2014

A central part of the AltaRobot project was the implementation of an immersive video streaming experience. The rover operator should feel as if they are actually present at the deployment site. To accomplish this, the rover streams stereoscopic video over the network to a head-mounted display. In the complete product, the user can control the cameras by moving their head, which contributes to the overall immersive experience.

Implementation

The video streaming subsystem is composed of two parts. The first is a custom C program that captures and encodes the video using the VideoCore-specific MMAL multimedia API. The program sets up the cameras for capture, initializes the hardware-accelerated H.264 encoder pipeline, and writes the encoded stereoscopic video stream to standard output. The second part is the streamd daemon. Streamd coordinates the video streaming process by listening for commands on a local UNIX socket and executing the requested actions.
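To illustrate the command-handling idea, here is a minimal Python sketch of a daemon that reads one text command per line from a local UNIX socket and dispatches it to a handler. It is not the actual streamd code: the line-based protocol, the command names, the reply format, and the function names `handle_command` and `serve` are all assumptions made for this example.

```python
import os
import socket


def handle_command(line, actions):
    """Dispatch one text command such as 'start 192.168.1.10 5004'.

    `actions` maps a command name to a callable (hypothetical protocol).
    """
    parts = line.strip().split()
    if not parts:
        return "error empty command"
    name, args = parts[0], parts[1:]
    action = actions.get(name)
    if action is None:
        return "error unknown command " + name
    try:
        return "ok " + str(action(*args))
    except TypeError:
        # Simplification: treat any TypeError as a wrong argument count.
        return "error bad arguments for " + name


def serve(socket_path, actions):
    """Accept connections on a local UNIX socket and answer each command line."""
    if os.path.exists(socket_path):
        os.unlink(socket_path)  # remove a stale socket file from a previous run
    with socket.socket(socket.AF_UNIX, socket.SOCK_STREAM) as server:
        server.bind(socket_path)
        server.listen(1)
        while True:
            conn, _ = server.accept()
            with conn, conn.makefile("rw") as stream:
                for line in stream:
                    stream.write(handle_command(line, actions) + "\n")
                    stream.flush()
```

Keeping the dispatch logic in `handle_command` separate from the socket loop makes it possible to exercise the command protocol without opening a socket at all.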

The video is passed over the network using RTP. The streamd daemon spawns video capturers and pipes their output streams to ffmpeg instances, which in turn stream them to the targets. Reusing ffmpeg for streaming saved us time, which we could spend on other parts of the system.
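The capturer-to-ffmpeg pipeline can be sketched roughly as follows, again in Python. This is an illustration under assumptions, not the project's code: it assumes the capturer writes a raw H.264 elementary stream to standard output, the capturer command is supplied by the caller, and the helper names `ffmpeg_rtp_command` and `start_stream` are invented for this example. The ffmpeg options used (`-f h264`, `-i pipe:0`, `-c:v copy`, `-f rtp`) are standard, but the exact invocation used by streamd is not stated in the source.

```python
import subprocess


def ffmpeg_rtp_command(host, port):
    """Build an ffmpeg command that reads H.264 from stdin and sends it via RTP."""
    return [
        "ffmpeg",
        "-f", "h264",      # assumption: input is a raw H.264 elementary stream
        "-i", "pipe:0",    # read the input from standard input
        "-c:v", "copy",    # do not re-encode; the hardware encoder already did the work
        "-f", "rtp",       # use ffmpeg's RTP muxer
        "rtp://%s:%d" % (host, port),
    ]


def start_stream(capture_cmd, host, port):
    """Spawn the capturer and an ffmpeg instance, connected by a pipe."""
    capturer = subprocess.Popen(capture_cmd, stdout=subprocess.PIPE)
    streamer = subprocess.Popen(ffmpeg_rtp_command(host, port), stdin=capturer.stdout)
    # Close our copy of the pipe so the capturer sees a broken pipe if ffmpeg exits.
    capturer.stdout.close()
    return capturer, streamer
```

A call such as `start_stream(["./capture"], "192.168.1.10", 5004)` would start one stream, where `./capture` stands in for the capture program and the address is a placeholder for a target.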